The Emotion Machine

A few days ago one of the most eminent roboticists passed away. Professor Marvin Minsky was a pioneer. He was a cofounder of the MIT Artificial Intelligence Laboratory, now part of CSAIL. CSAIL and its members have played a key role in the computer revolution. The Lab’s researchers have been key movers in developments like time-sharing, massively parallel computers, public-key encryption, the mass commercialization of robots, and much of the technology underlying Artificial Intelligence (AI), robotics, the Internet and the World Wide Web.

Prof. Minsky was an inspiration to many young researchers. I was one of them. Part of my PhD thesis is based on his view of how to create a real AI. He suggested that the way to create a machine that imitates human behavior is not by constructing a unified, compact theory of AI, like the ones physicists constructed during the nineteenth and twentieth centuries. Those two centuries were very successful for Physics. Scientists described Electromagnetism in just four equations and Relativity in just one.

On the contrary, he argued that it is very unlikely that we will find a unified theory of AI, just as psychologists and neuroscientists haven't found a unified theory of the human brain. He suggested that our brain contains resources that compete with each other to satisfy different goals at the same moment. He called these Ways to Think. Here is a publication that summarizes his theory.

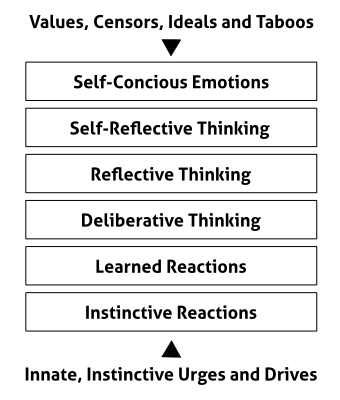

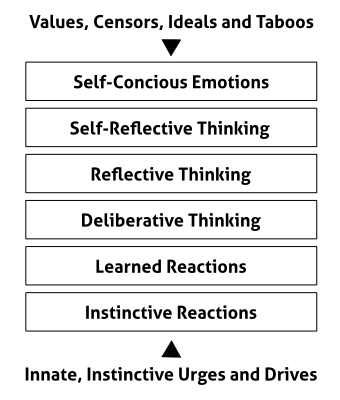

He also published a book called The Emotion Machine that, in my personal view, is one of the most important books on Artificial Intelligence of all time. In this book, Minsky proposed a six-level model of the mind. He explained the different levels this way:

Instinctive Reactions: Joan hears a sound and turns her head. All animals are born equipped with ‘instincts’ that help them to survive.

Learned Reactions: She sees a quickly oncoming car. Joan had to learn that conditions like this demand specific ways to react.

Deliberative Thinking: To decide what to say at the meeting, she considers several alternatives, and tries to decide which would be best.

Reflective Thinking: Joan reflects on what she has done. She reacts, not just to things in the world, but also to recent events in her brain.

Self-Reflective Thinking: Being “uneasy about arriving late” requires her to keep track of the plans that she’s made for herself.

Self-Conscious Emotions: When asking what her friends think of her, she also asks how her actions concord with ideals she has set for herself.

In his book, Minsky doesn't propose a way to transfer this model to a robot. But he gives us a hint: we could state the problem as a network of different Ways to Think (the actions or behaviors the robot performs) that compete with each other according to their relative importance inside the model (something like weights that give priorities to the actions). This structure is very similar to Artificial Neural Networks.
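To make the idea of competing Ways to Think more concrete, here is a minimal sketch in Python. It is only my own illustration, not something taken from Minsky's book: the class `WayToThink`, the function `select`, the behavior names and all the numbers are hypothetical. Each behavior has a weight (its relative importance) and a relevance function that says how strongly the current situation calls for it. The behavior with the highest product of the two wins the competition.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical representation of what the robot perceives:
# a signal name mapped to its intensity in the range [0, 1].
Percepts = Dict[str, float]


@dataclass
class WayToThink:
    """One competing behavior (illustrative only, not Minsky's formulation)."""
    name: str
    weight: float                            # relative importance in the model
    relevance: Callable[[Percepts], float]   # how much the situation calls for it

    def activation(self, percepts: Percepts) -> float:
        # Priority of this behavior in the current situation.
        return self.weight * self.relevance(percepts)


def select(ways: List[WayToThink], percepts: Percepts) -> WayToThink:
    """Winner-take-all competition: the most activated behavior is chosen."""
    return max(ways, key=lambda way: way.activation(percepts))


# Assumed example behaviors, loosely inspired by the Joan examples above.
ways = [
    WayToThink("turn_head", 0.5, lambda p: p.get("sound", 0.0)),
    WayToThink("avoid_car", 2.0, lambda p: p.get("oncoming_car", 0.0)),
    WayToThink("plan_speech", 1.0, lambda p: p.get("meeting_soon", 0.0)),
]

calm = {"sound": 0.8, "meeting_soon": 0.3}
danger = {"sound": 0.8, "oncoming_car": 0.9}

print(select(ways, calm).name)    # turn_head
print(select(ways, danger).name)  # avoid_car
```

In this sketch the weights are fixed by hand. In a real system they would presumably have to be learned or adjusted by the higher, reflective levels of the model, which is where the resemblance to a neural network becomes more interesting.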

The current state of Artificial Intelligence

We are very far from making robots with emotions, whether following Minsky's model or any other. We have certainly made amazing advances thanks to Deep Learning. Right now a machine can surpass human performance in face recognition, image recognition or playing the game of Go.

It is really remarkable that we have surpassed human performance even though we know that humans do not learn that way. In Deep Learning, we train a network with millions or billions of examples, but a human does not need that many examples to learn something. In fact, we are very efficient and need very few examples to learn a new concept.

The reason is that the human brain is really good at generalizing concepts and making relationships between ideas. This is something no robot is able to accomplish (right now). Therefore, this is a great challenge for AI: how to generalize ideas as the human brain does.

So far we have robots like Pepper that can identify emotions and act accordingly. In fact, a couple of years ago I developed an algorithm for the humanoid HOAP-3 that perceives sadness or happiness in a person and reacts accordingly. But these are not real emotions.

To be honest we are really far from making robots intelligent enough to have emotions or a high level of intelligence.

Lately, there has been an intense debate about whether we should create intelligent machines. People like Elon Musk and Stephen Hawking backed an open letter proposing to invest more in AI, while keeping in mind that it could be dangerous if we misuse it. This letter was signed by thousands of scientists, including myself.

The truth is that this is a problem we will only face far in the future. In the words of one of the top Deep Learning experts, Andrew Ng: "Worrying about evil superintelligence today is like worrying about overpopulation on Mars".