Introduction

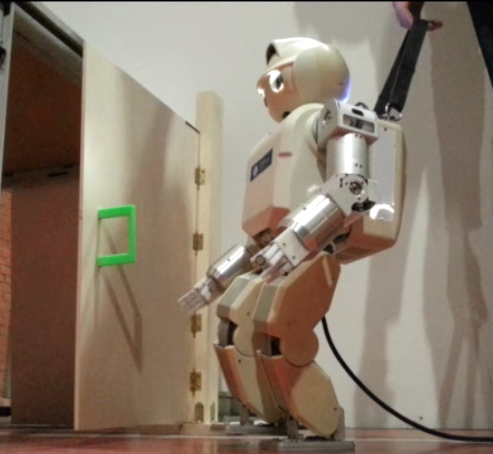

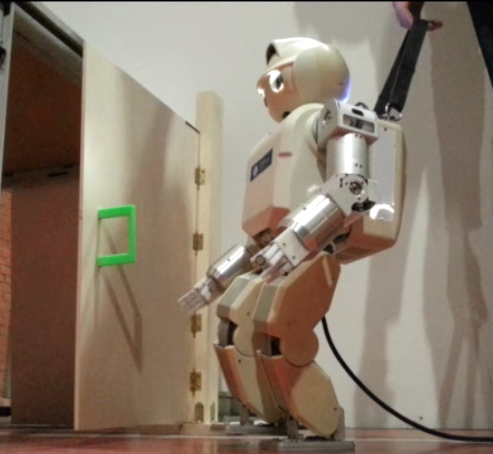

The title of the thesis was Humanoid Robot Control of Complex Postural Tasks based on Learning from Demonstration [1] and it was directed by Prof. Carlos Balaguer, from Universidad Carlos III of Madrid, and Dr. Thrishantha Nanayakkara, from King's College London. Its objective is to make an advancement in the development of complex behaviors in humanoid robots, in order to allow them to share our environment in the future. The experimental justification of this thesis was developed using the humanoid robot HOAP-3.

Human Learning: Imitation and Innovation

It is widely known by psychologists and neuroscientists that imitation learning is one of the first methods toddlers use to develop their skills. Furthermore, there is evidence that justifies that the imitation between humans is goal-directed.

Recent studies show that apes are more suitable to imitate while children show more tendency to over-imitate, in the sense that children make an attempt to improve the optimality of the learned skills. In that sense, skill innovation is, therefore, an essential part of human behavior [2].

Consider a child learning motor skills based on demonstrations performed by his parent. In this case, the problem of relating demonstrations performed by the parent to the child’s own kinematic scale, weight and height, known as the correspondence problem, would be one of the complex challenges that should be solved first. The correspondence problem is one of the crucial problems of imitation and can be stated as the mapping of action sequences between the demonstrator and the imitator.

From that perspective, the child could find a solution that fits his own muscular strength, size, reachable space, and kinematic characteristics which somehow match the level of optimality of demonstrations performed by the parent. This problem can be solved by mapping movements made in a different kinematic scale to a common domain, such as a set of optimal criteria that defines the goal of the action.

Learning from Demonstration and Skill Innovation in Robots

The thesis proposed a novel method for humanoid robots to acquire optimal behaviors based on human demonstrations. We solved the correspondence problem by making comparisons in a common domain, a reward space defined by a multi-objective reward function. The experimental results show advancement in how a humanoid robot can learn to imitate and innovate motor skills from demonstrations of human teachers of larger kinematic structures and different actuator constraints.

Behavior Representation in the reward domain

The representation of the behavior goal is made through a reward function. The shape of the reward functions is selected in accordance with the task. In the case of standing up from a chair, where it is important to maintain stability, the reward value would be high when the robot is in a stable position and would be low when the robot is unstable.

In a similar way, if the task is important to minimize the effort, the reward value would be high when the robot actuators have a small torque (therefore a small effort) and would be low when the torque is high (therefore a great effort). Consequently, the reward function acts as an attractor of the behavior's goal.

The reward function is formed by different components, depending on the objective of the action in every moment. This agrees with the theory of Marvin Minsky, one of the founders of Artificial Intelligence, which proposes that our brain manages different resources that compete with each other to fulfill different goals [3].

Experimental Results

We collected 3D motion data of a group of human subjects performing several behaviors, the first one was a stand-up movement, and the second one was a sequence of behaviors such as walking to a door and open it.

Then we defined a multi-objective reward function as a measurement of the goal optimality for both humans and robots, which is defined in each subtask of the global behavior.

Finally, we optimized a policy to generate whole-body movements for the robot that produces a reward profile that is compared and matched with the human reward profile, producing an imitative behavior. Furthermore, we can search in the proximity of the solution space to improve the reward profile and innovate a new solution, which is more beneficial for the humanoid.

In order to generate the movement, we used a genetic algorithm called Differential Evolution. A genetic algorithm is an optimization method that is used to generate candidate robot movements whose reward is matched to the human's reward. The matching of the reward profiles is performed using Kullback-Liebler divergence.

References

- M. González-Fierro, "Humanoid Robot Control of Complex Postural Tasks based on Learning from Demonstration", Ph.D. thesis, 2014.

- M. Nielsen, I. Mushin, K. Tomaselli and A. Whiten, "Where culture takes hold: overimitation and its flexible deployment in western, aboriginal, and bushmen children", Child development, vol. 85, num. 6, pp. 2169–2184, 2014.

- M. Minsky, "The emotion machine", New York: Pantheon, 2006.